Why Article Forge doesn't use GPT-3 and why everyone is thinking about content generation the wrong way.

Since its release in 2020, GPT-3 has become synonymous with artificial intelligence and text generation. Countless research papers have analyzed its capabilities, from solving problems and answering questions to telling jokes. GPT-3 has been featured by every major news organization under the sun, and a new startup built around GPT-3 seems to emerge every other day.

For the customers of these startups, using GPT-3 to write text has become almost synonymous with using AI to write text.

OpenAI, the creator of GPT-3, has produced the best-marketed AI model[1] of all time.

But the stratospheric rise of GPT-3 has stifled better approaches. Two years might not seem like a long time, but in the fast-moving field of artificial intelligence, it might as well be a decade.

While AI researchers continue to devise even more impressive ways of writing content, end users of AI-powered tools are stuck using outdated technology.

Where is GPT-3 Strong?

That said, GPT-3 does have several strengths, the biggest being its flexibility. GPT-3 was trained on a large portion of the internet, and the internet is very diverse. People write all sorts of things: pop culture stories, persuasive ad copy, educational material on preparing for the MCAT, trivia about US history, philosophical discussions on artificial intelligence, and everything in between.

By training on all of this data, and thanks to its very large size, GPT-3 has learned to do many of these tasks without extra engineering, research, or training. This makes GPT-3 a great Swiss Army knife for prototyping new ideas, especially if you lack expensive GPU resources or machine learning research expertise.

Examples include tasks like "take a positive review and turn it into a negative one," "write marketing copy about a product with these five features," or "take all of this data and turn it into a two-sentence summary." A single model that can do all of that with no coding required is quite powerful!

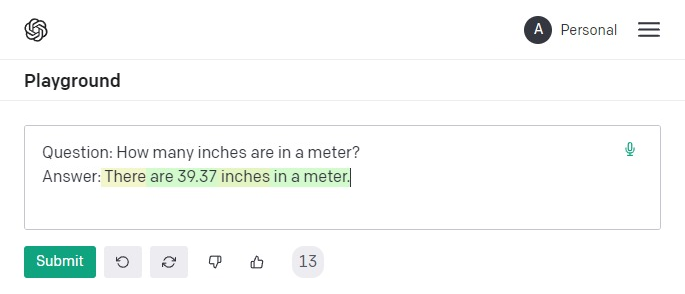

Another great advantage of large language models like GPT-3 is that they have memorized a lot of information. If you ask GPT-3, "How many inches are in a meter?" it will correctly answer "39.37".

Combine these two strengths, and you have something that can write generally decent, mostly accurate content with almost no engineering effort.

The Downsides of Being "Mostly Accurate"

I said above that GPT-3 was trained on the internet, and that while reading it, GPT-3 memorized much of the information it read. This is very useful in some cases, but it can be disastrous in others.

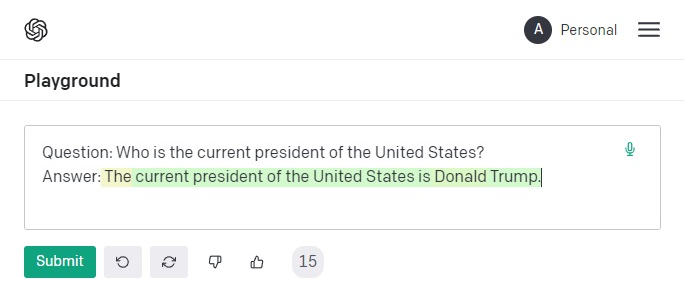

If you ask GPT-3 who the current president of the United States is, it answers "Donald Trump," who has not been president since 2021.

The problem is that GPT-3 was trained once and memorized everything during that training. Since then, the world has changed, and GPT-3's knowledge has grown stale.
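To make the "trained once" problem concrete, here is a toy Python sketch. It is not how GPT-3 or Article Forge works internally; the class names and the dictionary standing in for "the world" are hypothetical. The closed-book model copies the facts once, at training time, while the open-book model looks them up when asked, so only the open-book model sees changes made after training.

```python
class ClosedBookModel:
    """Memorizes a copy of the world once, at training time (hypothetical toy)."""

    def __init__(self, world: dict):
        self.memory = dict(world)  # frozen snapshot; later changes are never seen

    def answer(self, question: str) -> str:
        return self.memory.get(question, "unknown")


class OpenBookModel:
    """Keeps a reference to the world and looks facts up at question time (hypothetical toy)."""

    def __init__(self, world: dict):
        self.reference = world  # live reference, not a copy

    def answer(self, question: str) -> str:
        return self.reference.get(question, "unknown")


# State of the world when both models are "trained" (2020).
world = {"current US president": "Donald Trump"}
closed = ClosedBookModel(world)
open_book = OpenBookModel(world)

# The world changes after training (2021, when this post was written).
world["current US president"] = "Joe Biden"

print(closed.answer("current US president"))     # Donald Trump (stale)
print(open_book.answer("current US president"))  # Joe Biden
```

The only difference between the two classes is whether the facts are copied at training time or consulted at question time, which is exactly the gap between memorizing and researching.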

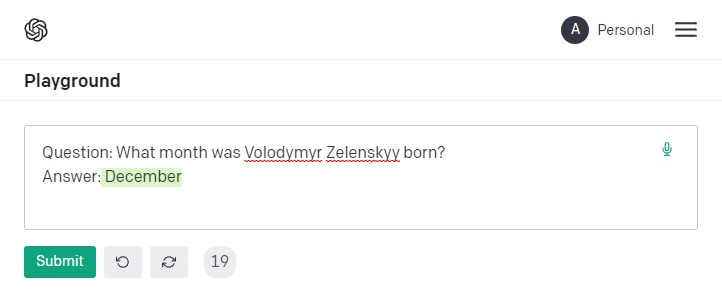

There are other situations where GPT-3 is just flat-out wrong. When asked what month Volodymyr Zelenskyy (the current president of Ukraine) was born, GPT-3 confidently and incorrectly answers December. He was actually born in January.[2]

Why is this? Zelenskyy was not commonly talked about when GPT-3 was first trained, meaning that GPT-3 never assigned any importance to "memorizing" anything about him. Now he is important, but GPT-3 never got the memo.

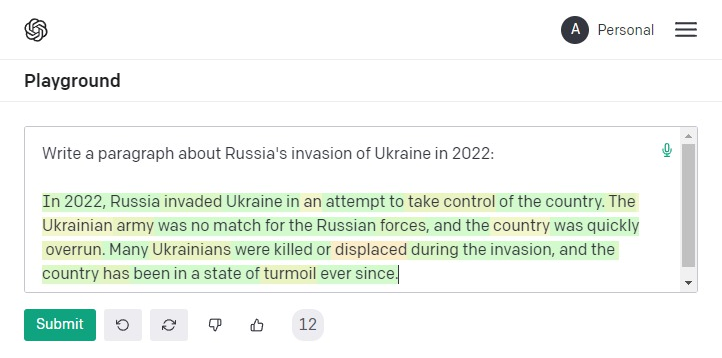

This becomes especially dangerous when you have GPT-3 actually write text.

GPT-3 writes very confidently and is often accurate, but it is also often disastrously inaccurate.

The Downsides of Being Closed Source

Despite the name OpenAI, GPT-3 is one of the few models whose weights and code are completely closed. Except for Microsoft, a part owner of OpenAI, nobody is allowed to modify or study GPT-3's internals, which makes it impossible to apply new research techniques to it.

For many people, this is not a big deal. They are not engineers or researchers. They simply want an easy-to-use API that mostly works, around which they can quickly build a product to sell. (This has led to a flurry of undifferentiated products that are largely clones of each other, all with the same problems and limitations.)

However, to researchers trying to improve the field, this opaqueness is a stop sign that prevents further iteration and improvement. This is in stark contrast to Google and Facebook, who release their work publicly to try to further AI research.

If you are looking for an example of open source versus closed source, look no further than image generation. OpenAI released DALL-E 2 earlier this year. But within months, it was completely overshadowed by Stability.ai, which released a similar model called Stable Diffusion as open source. Researchers immediately got to work improving and iterating on it, leaving DALL-E 2 in the dust only months after its release.

The same thing is happening to GPT-3. It is falling further and further behind on industry AI benchmarks[3], and its closedness prevents the deep tweaking that would be required to help it catch up.

And for people looking to use AI to create content, there is no way to truly tailor it to writing large amounts of high-quality, accurate text. You can (by paying OpenAI) lightly fine-tune the model, but there is no way to make the level of changes required to turn it from a novelty into something great.

Is There a Better Alternative?

Outside of GPT-3, research into AI content generation has been moving at a brisk pace. Researchers have found various major inefficiencies in how GPT-3 was trained and have managed to create even better language models at smaller sizes.

But, one of the most important evolutions has flown largely under the radar: a language model capable of doing its own research.

Top researchers are already in the know. Adept.ai, founded by former Google researchers who helped invent the transformer (the architecture GPT-3 is built on), recently released a prototype that can use Wikipedia to research and answer questions.

LaMDA, Google's chatbot, is so convincing that one of the Google engineers testing it became convinced it was conscious. It does its own research in the background to better answer chat queries.

Our product, Article Forge, uses AI to create high-quality, accurate, and helpful long-form articles in a single click. It is an end-to-end article writer rather than a writing assistant. We are doing the same thing as Adept.ai and LaMDA, but specifically for article creation, and to that end, we have built our own knowledge search engine.

First, An Important Note About Google

A lot of this pioneering work is being done by Google researchers. This should not be surprising - Google is itself a search engine. Their entire business revolves around accurately answering questions from users using the internet.

When you search "who won the last football game" or "Volodymyr Zelenskyy birthdate," Google needs to give you an accurate and up-to-date answer. Google can't tell you that Donald Trump is the current US president.

So you can expect that when Google returns search results, it is using facts and information gathered from all across the internet to verify whether something is accurate and helpful.

(By the way, this is a topic we have discussed at great length with real AI researchers working at Google!)

So if you are generating SEO content with an AI tool that doesn't do research, not only will your users realize the information is inaccurate, but Google will as well. And if you are generating content and don't care about SEO, well, you probably still want your content to be accurate… right?

What Is a Knowledge Search Engine?

You are probably already familiar with a normal search engine: you enter a query and get back results. Our knowledge search engine works similarly, but it is built specifically for machines.

Instead of taking the kind of query a user would type, this search engine takes queries that a machine would write. Our AI can hand the search engine its entire thought process: what it is writing, how it plans to write it, and all of the topics it wants to cover. The search engine then returns results geared entirely toward helping it write that content.

The results this search engine returns are also different. Instead of returning websites, it returns key pieces of knowledge in a way easily digestible by machines.
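As a rough illustration of how a machine-written query differs from a human one, here is a toy Python sketch. Every name in it (`WritingPlan`, `KnowledgeSnippet`, `search_knowledge`, the stopword list, the source labels) is hypothetical, and the word-overlap scoring is a deliberately simple stand-in: this post does not describe how Article Forge's knowledge search engine actually ranks results. What the sketch shows is the shape of the idea: the query is the writer's whole plan, and the results are short, structured facts rather than links to websites.

```python
import re
from dataclasses import dataclass

# Hypothetical: common words ignored when matching.
STOPWORDS = {"a", "an", "the", "is", "of", "in", "to", "are", "how", "many", "as", "about"}


@dataclass
class WritingPlan:
    """What the writing model hands the search engine: its whole plan, not a short query."""
    title: str
    topics: list[str]


@dataclass
class KnowledgeSnippet:
    """A machine-friendly piece of knowledge instead of a web page."""
    text: str
    source: str


def tokenize(text: str) -> set[str]:
    """Lowercase words and numbers, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def search_knowledge(plan: WritingPlan, index: list[KnowledgeSnippet], k: int = 3) -> list[KnowledgeSnippet]:
    """Rank snippets by word overlap with the title plus every planned topic."""
    query_terms = tokenize(plan.title)
    for topic in plan.topics:
        query_terms |= tokenize(topic)
    scored = [(len(query_terms & tokenize(s.text)), s) for s in index]
    scored = [pair for pair in scored if pair[0] > 0]  # drop irrelevant snippets
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [snippet for _, snippet in scored[:k]]


index = [
    KnowledgeSnippet("A meter is about 39.37 inches.", "units-reference"),
    KnowledgeSnippet("An inch is defined as exactly 2.54 centimeters.", "units-reference"),
    KnowledgeSnippet("Stable Diffusion is an open source image generation model.", "ml-news"),
]
plan = WritingPlan(
    title="Converting meters to inches",
    topics=["how many inches are in a meter", "definition of an inch"],
)

results = search_knowledge(plan, index, k=3)
for snippet in results:
    print(snippet.source, "->", snippet.text)
```

Here the unrelated Stable Diffusion snippet shares no meaningful words with the plan and is dropped, while the two unit facts come back ranked by relevance. A production system would use far better relevance models; the point is only that the whole plan, not a few keywords, drives retrieval.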

Using this search engine is almost like hooking Google directly up to your brain: it reads your mind, then uploads the facts and knowledge that answer your question directly back into your brain.

This Makes Content More Accurate But Also More Insightful

It is not hard to understand why AI (and Article Forge specifically) would benefit from a search engine built directly for it.

Unlike GPT-3, this allows Article Forge to write with reference material to ensure that what it says is accurate and up to date. It can also judge the quality of a reference to make sure it isn't writing fake news. When it writes something inaccurate, we can trace back the bad information it was given, so it doesn't repeat the same mistake.
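The two safeguards described here, judging references and tracing mistakes back to their inputs, can be sketched in a few lines of Python. This is a hypothetical illustration only: the `Fact` and `Sentence` records, the 0-to-1 `quality` score, the 0.7 threshold, and the source names are all assumptions, and the post does not say how Article Forge scores references. The sentences are written by hand here; in a real system they would come from the language model.

```python
from dataclasses import dataclass


@dataclass
class Fact:
    fact_id: str
    text: str
    source: str
    quality: float  # hypothetical reliability score between 0 and 1


@dataclass
class Sentence:
    text: str
    fact_ids: list[str]  # provenance: which facts the sentence was written from


def filter_reliable(facts: list[Fact], min_quality: float = 0.7) -> list[Fact]:
    """Keep only references judged reliable enough to write from."""
    return [f for f in facts if f.quality >= min_quality]


def trace_sources(sentence: Sentence, facts: list[Fact]) -> list[str]:
    """List the sources behind a sentence, e.g. once it has been flagged as wrong."""
    by_id = {f.fact_id: f for f in facts}
    return [by_id[i].source for i in sentence.fact_ids if i in by_id]


facts = [
    Fact("f1", "Zelenskyy was born in January 1978.", "encyclopedia", 0.95),
    Fact("f2", "Zelenskyy was born in December.", "anonymous-forum", 0.20),
]

# Only the reliable fact survives the quality filter.
usable = filter_reliable(facts)
print([f.fact_id for f in usable])  # ['f1']

# If an inaccurate sentence slips through, its provenance points to the bad input.
bad_sentence = Sentence("Zelenskyy was born in December.", fact_ids=["f2"])
print(trace_sources(bad_sentence, facts))  # ['anonymous-forum']
```

The key design choice is that every sentence carries the IDs of the facts it was written from, so a wrong sentence leads straight to the specific input that caused it, and that source can be down-weighted in the future.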

But also, there is another big advantage. GPT-3 is a very large language model with a very large "brain," but a huge portion of that "brainpower" is devoted to memorizing things. Since GPT-3 doesn't do outside research, it needs to rely on its own memory - which we showed above is prone to mistakes.

Since our AI is not burdened with this requirement, it is instead able to use its brainpower to work on drawing insights from the data it is given. It can take the portion of its "brain" devoted to memory and instead use it towards "intelligence".

It is similar to studying for an exam. If it were a closed-book exam (no reference material allowed), you would have to spend all of your time memorizing the material. If it were an open-book exam (any reference material allowed), you could instead spend that time finding more insightful and useful ways to use that information.

All of these factors combine to make Article Forge content more accurate and more useful than content written by GPT-3 or by any of the writing assistants powered by it.

We Control Our Destiny

Of course, I'm not going to promise that, as of this writing, the content Article Forge writes is 100% perfect 100% of the time (it is really good though!). You will almost certainly want to proofread the content yourself, but then again, you'd probably do the same no matter how you got your content.

But what I can promise is that since we have built these models ourselves, we are in control of our own destiny. We aren't reliant on another company for models, and we aren't even reliant on investors. Despite taking no outside funding, we have still grown to be 20 people strong.

Even more importantly, while most companies that use GPT-3 are largely staffed by marketing people, 70% of our company (14 of us at the time of writing) are machine learning engineers or linguists working directly on improving the quality of our AI.

So we are in control of our research roadmap and can tailor it specifically to solving our users' problems - writing a high quality and factually accurate long-form article in a single click.

And AI research, both internal and external, is moving at a breathtaking pace. As these incredible advancements continue, Article Forge will be the first tool to deliver them to end users, with no need to wait on a third party to update their API!

So no matter what your opinion is of AI generated content right now, pay close attention to this field. What seems impossible and out of reach now will become commonplace and easy in the blink of an eye.

Footnotes:

[1] Throughout the article, I use the terms "model" and "artificial intelligence" interchangeably to refer to large language models. If you aren't sure what a large language model is, imagine it as a very powerful AI with a very big "brain".

[2] In machine learning terms, confidently stating something factually incorrect is called "hallucination," and the name fits: the model is hallucinating information!

[3] This is hardly the only benchmark. For further examples, Chinchilla (developed by DeepMind) and U-PaLM (developed by Google) handily beat GPT-3 across a wide variety of tasks.


So, in the end, which model is Article Forge using? Can you go into a bit more detail on this?

We are not using just a single model. If you look around at the various ML leaderboards (some linked to in the post itself), you will see many of the models that we use at the very top. We will, at a later time, write a more technical post where we go into a bit more detail about what we are using!

I am really looking forward to seeing you develop a product that enables users to enter a list of URLs and/or PDFs, which your AI can use for its research. It would be a very cool way to aggregate knowledge drawn from sources the writer knows and trusts.

Thank you for this incredibly interesting article.

That is on our roadmap and should be coming very soon! 🙂

Great explanation! Very helpful.

I can now see how you guys are a cut above and how AF can deliver much better long form with the click of a button. I’m in.

Awesome, we’re excited to have you! 😀

Can you confirm that Article Forge is not just a spinner? People think it is, and they consider Article Forge content to be duplicate content.

I can confirm that Article Forge is NOT a spinner in any way.

Article Forge used to be like that all the way back in 2019, but that is ancient history. I think some people may have tried it back then and then incorrectly assumed we never changed or improved.

Our articles are written from scratch using deep learning models – regardless of whether you are using GPT-3 or not, this is the only way to write high-quality text right now.

We continually test our articles on Copyscape, and our articles come back as unique – because they are.